HIPAA 2026: the rule changes your healthtech startup is not prepared for

For 23 years, the HIPAA Security Rule has given organizations a quiet escape hatch. Certain security controls were labeled “addressable,” which meant you could assess whether they were reasonable for your situation and, if you decided they were not, document your reasoning and move on.

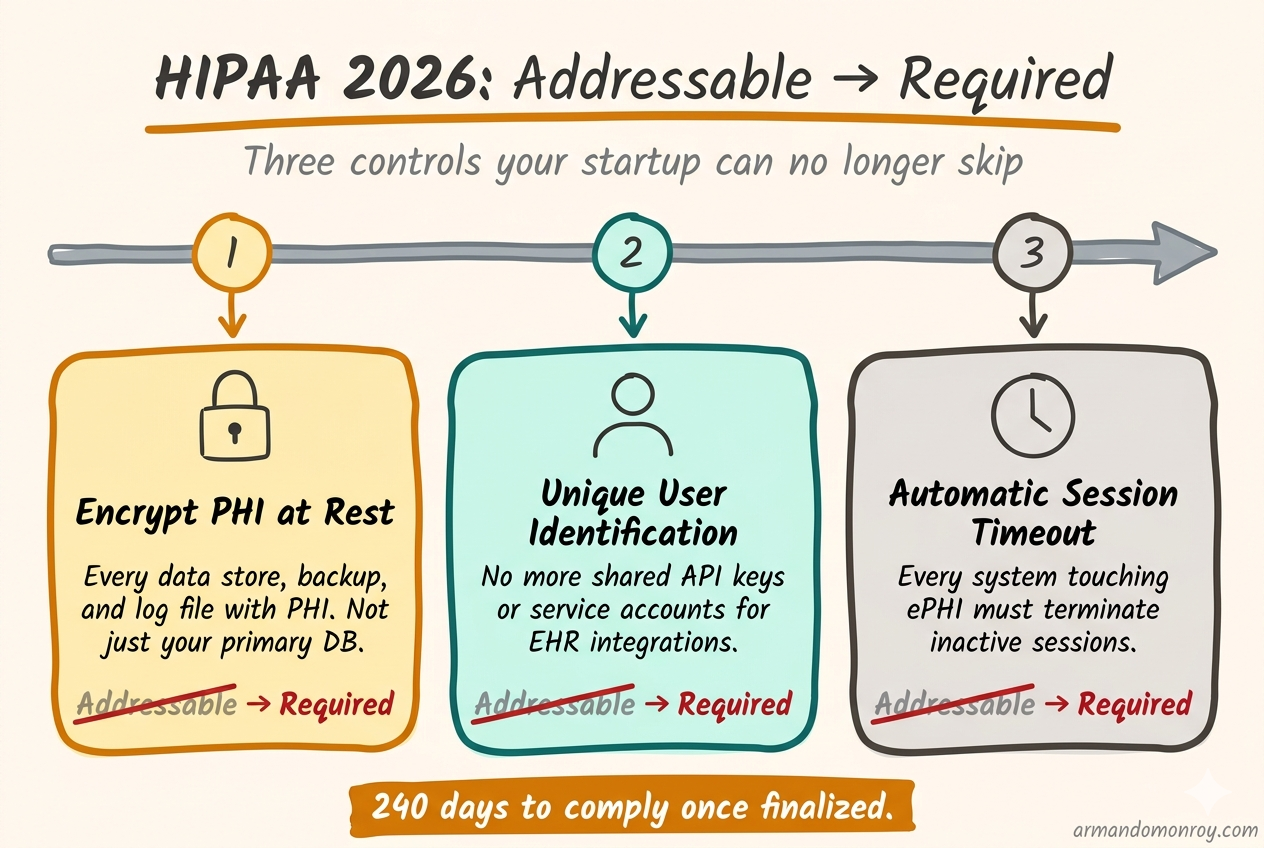

Encryption at rest? Addressable. You could skip it if you wrote down why. Encryption of PHI in transit? Addressable. Automatic logoff after a period of inactivity? Also addressable.

In December 2024, the Office for Civil Rights (OCR) at the Department of Health and Human Services proposed a rule that closes that escape hatch entirely. Every addressable specification becomes required. No more documented exceptions. No more “we determined this was not reasonable for our environment.”

If you are building healthtech and handling PHI, this would be the biggest change to the Security Rule since it took effect in 2005.

What “addressable” actually meant, and why it mattered

The distinction between required and addressable was written into the HIPAA Security Rule in 2003. Required meant mandatory, no exceptions. Addressable meant you had to evaluate whether the control was reasonable and appropriate for your organization. If you determined it was not, you had two options: implement an equivalent alternative measure, or document why neither the specification nor an alternative was necessary.

That second option is the one that mattered. In practice, “addressable” became shorthand for “optional if you write a paragraph explaining why.” I have reviewed compliance programs at dozens of healthtech startups, and the pattern is consistent. Somewhere in a Google Doc or a Notion page, there is a paragraph that says something like: “We have determined that encryption of PHI at rest is not required at this time because our cloud infrastructure provider manages the underlying storage.”

The reasoning is often thin. But it satisfied the letter of the rule, and for 20 years, it was enough. Startups could check the box and focus on shipping product.

The proposed rule changes that. If it is finalized, every implementation specification becomes required. The only exceptions are narrow and specific, not the broad organizational discretion that “addressable” allowed.

Where things stand right now

The Notice of Proposed Rulemaking hit the Federal Register on January 6, 2025. The comment period closed March 7, 2025, with OCR receiving roughly 4,700 responses. Many of them were critical. A coalition of more than 100 hospital systems and provider associations petitioned HHS to withdraw the proposal entirely.

Despite that pushback, the rule remains on OCR’s regulatory agenda for finalization in May 2026. We are two months away from that target.

There is real uncertainty about what happens next. The NPRM was published in the last days of the Biden administration, and the Trump administration’s deregulatory stance has led some to expect the rule will be shelved. But healthcare cybersecurity has had bipartisan support for years, and the string of massive healthcare data breaches in 2023 and 2024 created political pressure to act. A final rule may emerge in a slimmed-down form, but the core change, eliminating the addressable/required distinction, has broad support even among critics.

If you are a healthtech CTO, the strategic question is not “will this exact rule be finalized?” The question is “should I build my compliance program around the assumption that these controls become mandatory?” And the answer to that is yes, because your enterprise buyers already require most of them.

The specific changes that matter for healthtech startups

I am going to walk through the proposed requirements that will hit healthtech startups hardest. These are the controls that most startups have skipped or half-implemented because they were addressable.

Encryption everywhere, no exceptions

Under the current rule, encryption of PHI at rest is addressable. Many startups rely on cloud provider defaults and consider the matter handled. AWS S3 encrypts objects by default now. RDS supports encryption at rest. Good enough, right?

The proposed rule makes encryption mandatory for all ePHI, whether it is structured, semi-structured, or unstructured. Every data store, every backup, every log file that contains PHI needs to be encrypted. Not just the primary database. The staging environment. The analytics pipeline. The CSV export that an engineer downloads to debug a data issue.

If you are running on AWS or GCP with reasonable defaults, you are probably closer than you think on your primary data stores. The gaps are usually in the places people forget about: local development environments, CI/CD artifacts, log aggregation services, and third-party tools that ingest PHI.

The end of shared service accounts

The proposed rule requires unique user identification for every person accessing systems that contain ePHI. Unique user identification is actually already a required specification under the current rule, but in practice startups routinely use shared credentials for system-to-system connections.

This is where it gets painful for healthtech specifically. If your product integrates with a customer’s EHR (Electronic Health Record) system, there is a good chance you are using a shared API key or a single service account to authenticate that connection. One set of credentials, used by your application, with no way to attribute individual access to PHI.

The proposed rule requires that you can trace every action on ePHI back to an individual user. Per-user authentication, audit logging that captures who did what, and access reviews that examine individual permissions. A single service account that your entire backend uses to pull patient data from an EHR does not meet that standard.

This is not a quick configuration change. For many startups, it means rearchitecting how their clinical integrations work. Start scoping that work now.
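Whatever authentication pattern you move to, the audit trail has to name a person. Below is a minimal sketch of an audit record that refuses to be written without an individual actor. The `PhiAccessEvent` schema and the `record_access` helper are hypothetical, and whether a given design satisfies the final rule depends on its text and on what your customers' EHRs support.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class PhiAccessEvent:
    """One audit record per access to ePHI (hypothetical schema)."""
    actor_user_id: str      # the individual person who triggered the access
    service_identity: str   # the per-service credential that made the call
    action: str             # e.g. "read", "update", "export"
    resource: str           # e.g. an EHR resource path
    timestamp: str          # UTC, ISO 8601


def record_access(actor_user_id: str, service_identity: str,
                  action: str, resource: str) -> str:
    """Build a JSON audit line; refuse to log an access with no individual actor."""
    if not actor_user_id:
        raise ValueError("PHI access must be attributable to an individual user")
    event = PhiAccessEvent(
        actor_user_id=actor_user_id,
        service_identity=service_identity,
        action=action,
        resource=resource,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(event), sort_keys=True)


print(record_access("clinician-4821", "svc-ehr-sync", "read", "Patient/123"))
```

Failing loudly at write time is the design choice here: an access path that cannot name its user surfaces as an error during development instead of as an unattributable gap in the logs.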

Multi-factor authentication for everything

The current rule does not explicitly require multi-factor authentication (MFA); it requires only that you verify that anyone seeking access to ePHI is who they claim to be. The proposed rule makes MFA mandatory for all access to systems containing ePHI. Not just remote access. All access. That includes employees logging into internal tools on the office network and engineers accessing production systems from a VPN.

Most startups already have MFA for their cloud console and their identity provider. The gaps are usually in internal tools, CI/CD pipelines, and database access. If your engineers can SSH into a production server or query a production database without MFA, that is a gap.
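The usual fix for an internal tool is to put it behind your identity provider. Where you genuinely have to add a second factor yourself, time-based one-time passwords (TOTP, RFC 6238) are the common choice. The sketch below uses only the standard library and the common defaults (SHA-1, 30-second steps, 6 digits, one step of clock-drift tolerance); none of these values come from the proposed rule. In production, use a maintained library and store the shared secret (typically base32-encoded in authenticator apps) as carefully as a password.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at: float, step: int = 30, digits: int = 6) -> str:
    """RFC 6238 TOTP: HMAC-SHA1 over the time-step counter, then truncate."""
    counter = int(at // step)
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation from RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str, now: Optional[float] = None,
                window: int = 1, step: int = 30) -> bool:
    """Accept a code from the current step or up to `window` steps either side."""
    now = time.time() if now is None else now
    return any(
        hmac.compare_digest(totp(secret, now + i * step, step), submitted)
        for i in range(-window, window + 1)
    )
```

The RFC 6238 test vectors are a quick sanity check: with the ASCII secret `12345678901234567890`, `totp(secret, 59, digits=8)` should return `94287082`.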

Patch management with a clock on it

The proposed rule introduces explicit timelines for patching known vulnerabilities. Critical vulnerabilities must be patched within 15 days. High-risk vulnerabilities get 30 days.

For a startup running a modern cloud-native stack, this sounds manageable until you think about it. Fifteen days for a critical vulnerability means you need a process that detects the vulnerability, assesses its criticality for your environment, tests the patch, deploys it, and verifies it worked, all within two weeks. If that process does not exist today, and at most early-stage startups it does not, building it takes time.
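The arithmetic of the clock is the easy part, but it is worth encoding so nobody computes due dates by hand. This is a minimal tracker sketch. It assumes the clock starts on the day a vulnerability is identified and counts calendar days; confirm both against the final rule text. `Finding` and `missed_sla` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# SLAs from the proposed rule as described above. Assumption: the clock starts
# on the day the vulnerability is identified and counts calendar days.
PATCH_SLA_DAYS = {"critical": 15, "high": 30}


@dataclass
class Finding:
    vuln_id: str
    severity: str                      # "critical" or "high"
    identified_on: date
    patched_on: Optional[date] = None  # None while still open


def due_date(f: Finding) -> date:
    return f.identified_on + timedelta(days=PATCH_SLA_DAYS[f.severity])


def missed_sla(findings: List[Finding], today: date) -> List[str]:
    """IDs of findings patched after their due date, or still open past it."""
    return [f.vuln_id for f in findings
            if (f.patched_on or today) > due_date(f)]


findings = [
    Finding("VULN-1", "critical", date(2026, 3, 1)),                 # due 2026-03-16
    Finding("VULN-2", "high", date(2026, 3, 1), date(2026, 3, 20)),  # due 2026-03-31
]
print(missed_sla(findings, today=date(2026, 3, 20)))  # ['VULN-1']
```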

Vulnerability scanning and penetration testing

Vulnerability scanning at least every six months. Penetration testing at least annually. Both performed by qualified professionals, with written documentation of findings and corrective actions. Rescans required after significant system changes.

Many startups skip penetration testing until a prospect demands it. Under the proposed rule, it becomes a standing requirement. Budget $10,000 to $30,000 annually, depending on scope.

Network segmentation

The proposed rule explicitly requires network segmentation. If your application, your internal tools, and your development environment all sit in the same VPC with no segmentation between them, a compromise in one area gives an attacker access to everything.

This sounds straightforward, but it requires real engineering work. Segmenting a network that was built flat means redesigning security groups, setting up private subnets, potentially introducing a service mesh, and testing that nothing breaks when you restrict traffic that used to flow freely.
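Before redesigning anything, it helps to find the rules that make the network effectively flat. The sketch below flags ingress rules into a PHI subnet whose source is the whole VPC or the entire internet. The CIDR layout and the `(source, destination, port)` tuple format are hypothetical stand-ins for whatever your cloud provider's security-group export looks like.

```python
import ipaddress
from typing import List, Tuple

# Hypothetical layout: the whole VPC, and the private subnet reserved for PHI workloads.
VPC = ipaddress.ip_network("10.0.0.0/16")
PHI_SUBNET = ipaddress.ip_network("10.0.1.0/24")

Rule = Tuple[str, str, int]  # (source CIDR, destination CIDR, port)


def broad_phi_rules(rules: List[Rule]) -> List[Rule]:
    """Flag ingress rules into the PHI subnet whose source is the entire VPC or wider."""
    flagged = []
    for source, dest, port in rules:
        src = ipaddress.ip_network(source)
        dst = ipaddress.ip_network(dest)
        if not dst.overlaps(PHI_SUBNET):
            continue  # rule does not reach PHI workloads
        if src.prefixlen == 0:
            flagged.append((source, dest, port))  # open to any address
        elif src.version == VPC.version and src.supernet_of(VPC):
            flagged.append((source, dest, port))  # "flat" VPC-wide access
    return flagged


rules = [
    ("10.0.0.0/16", "10.0.1.0/24", 5432),  # whole VPC can reach the PHI database
    ("10.0.2.0/24", "10.0.1.0/24", 5432),  # only the app tier can reach it
    ("0.0.0.0/0", "10.0.1.0/24", 443),     # internet-wide access
]
print(broad_phi_rules(rules))
# [('10.0.0.0/16', '10.0.1.0/24', 5432), ('0.0.0.0/0', '10.0.1.0/24', 443)]
```

The second rule is the shape you are aiming for: only the tier that needs PHI can reach it.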

Technology asset inventory and network mapping

Every system, application, and device that can access ePHI must be inventoried. You must maintain a network map showing how ePHI moves through your systems. Both must be updated at least annually and whenever your environment changes.

This is not technically difficult. It is operationally tedious, and it is the kind of documentation that early-stage startups never create until someone asks for it. Start a spreadsheet now. It takes a few hours the first time and saves you days when an auditor or a prospect requests it.
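The spreadsheet can outgrow itself quickly, so the two checks that matter are worth automating: is every ePHI asset reviewed within the last year, and does every system in the data-flow map appear in the inventory? This is a minimal sketch. The `Asset` fields and the `(source, destination)` flow format are hypothetical, and the 365-day window approximates "at least annually."

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Tuple

REVIEW_WINDOW = timedelta(days=365)  # "at least annually", approximated as 365 days


@dataclass
class Asset:
    name: str
    owner: str
    touches_ephi: bool
    last_reviewed: date


def inventory_gaps(assets: List[Asset], flows: List[Tuple[str, str]],
                   today: date) -> Tuple[List[str], List[str]]:
    """Return (ePHI assets overdue for review, flow endpoints missing from the inventory).

    `flows` is a minimal network map: (source, destination) pairs of asset
    names describing where ePHI moves.
    """
    known = {a.name for a in assets}
    stale = sorted(a.name for a in assets
                   if a.touches_ephi and today - a.last_reviewed > REVIEW_WINDOW)
    unmapped = sorted({name for flow in flows for name in flow} - known)
    return stale, unmapped


assets = [
    Asset("postgres-prod", "eng", True, date(2025, 1, 10)),
    Asset("s3-exports", "eng", True, date(2025, 11, 1)),
]
flows = [("ehr-connector", "postgres-prod"), ("postgres-prod", "s3-exports")]
print(inventory_gaps(assets, flows, today=date(2026, 3, 1)))
# (['postgres-prod'], ['ehr-connector'])
```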

The 72-hour recovery requirement

If a cyber incident takes your systems down, the proposed rule requires that you restore critical systems and ePHI within 72 hours. You need to know which systems are critical, have tested backup and restoration procedures, and have confidence that your recovery time objective is actually achievable.

Most startups have backups. Few have tested whether they can actually restore from them under pressure, in a defined timeframe, to a known good state.
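A restore drill only tells you something if you time it. The sketch below measures a drill against the 72-hour window. It uses wall-clock time from the first restore start to the last verified system rather than the sum of step durations, because systems restored in parallel overlap. The data format is hypothetical. Note also that a drill starts the clock when restoration begins, while in a real incident the time spent detecting the problem and deciding to restore may also count, so leave margin.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

RECOVERY_WINDOW = timedelta(hours=72)

# (system, restore started, restore verified) as recorded during a drill
Step = Tuple[str, datetime, datetime]


def check_restore_drill(steps: List[Step]) -> Tuple[timedelta, bool]:
    """Return wall-clock time from first restore start to last verified system,
    and whether it fits inside the 72-hour window."""
    started = min(start for _, start, _ in steps)
    finished = max(end for _, _, end in steps)
    elapsed = finished - started
    return elapsed, elapsed <= RECOVERY_WINDOW


drill = [
    ("postgres-prod", datetime(2026, 3, 2, 9, 0), datetime(2026, 3, 2, 21, 0)),
    ("api", datetime(2026, 3, 2, 21, 0), datetime(2026, 3, 3, 3, 0)),
]
elapsed, ok = check_restore_drill(drill)
print(elapsed, ok)  # 18:00:00 True
```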

The business associate verification change

This one deserves its own section because it changes the relationship between healthtech startups and their covered entity customers in a way that nothing else in the rule does.

Under the current rule, covered entities sign Business Associate Agreements (BAAs) with their vendors, and that is largely the end of the oversight obligation. HHS has historically said that covered entities are not required to actively monitor whether their business associates are actually implementing the controls they agreed to.

The proposed rule changes that. Covered entities would need to obtain annual written verification from each business associate confirming that they have deployed the required technical controls. That verification must be supported by a written analysis from a cybersecurity subject matter expert. And a person of authority at the business associate must certify that the analysis is accurate.

If you are a healthtech startup selling to health systems, this means your customers will start asking for annual proof: not just a signed BAA, but documented evidence, validated by a security expert, that you are actually doing what you said you would do. Think of it as a lighter version of an annual audit, but initiated by your customer rather than a formal audit firm.

If you already have SOC 2 Type II, you are in good shape. The SOC 2 report provides much of the evidence a covered entity would need. If you do not have SOC 2, this new verification requirement is another reason to get it done.
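On your side as the business associate, the practical risk is letting one customer's verification quietly lapse. The sketch below tracks the three elements described above (the expert's written analysis, who wrote it, and who certified it) and flags renewals ahead of time. The record layout, the 365-day reading of "annual," and the 60-day lead time are all assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class BAVerification:
    """Hypothetical record of one customer's annual verification package."""
    customer: str
    expert_analysis_date: date   # written analysis by a cybersecurity expert
    expert_name: str
    certified_by: str            # person of authority at your company
    certified_on: date


def renewals_due(records: List[BAVerification], today: date,
                 lead_days: int = 60) -> List[str]:
    """Customers whose package is incomplete, expired, or expiring within `lead_days`.

    Assumes "annual" means 365 days after certification.
    """
    due = []
    for r in records:
        expires = r.certified_on + timedelta(days=365)
        incomplete = not (r.expert_name and r.certified_by)
        if incomplete or today >= expires - timedelta(days=lead_days):
            due.append(r.customer)
    return due


records = [
    BAVerification("health-system-a", date(2025, 5, 20), "External assessor", "CTO", date(2025, 6, 1)),
    BAVerification("clinic-b", date(2025, 12, 1), "External assessor", "CTO", date(2025, 12, 10)),
]
print(renewals_due(records, today=date(2026, 4, 15)))  # ['health-system-a']
```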

The 240-day clock

If the rule is finalized in May 2026, the math works like this: 60 days for the rule to become effective, plus 180 days to achieve compliance. That puts the compliance deadline between late December 2026 and late January 2027, depending on the exact publication date.
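The date arithmetic is simple enough to check directly. This is a sketch that assumes both periods run in calendar days from Federal Register publication. The final rule will state its own effective and compliance dates, and those are what count.

```python
from datetime import date, timedelta

EFFECTIVE_AFTER = timedelta(days=60)   # publication -> rule becomes effective
COMPLY_AFTER = timedelta(days=180)     # effective date -> compliance required


def compliance_deadline(published: date) -> date:
    return published + EFFECTIVE_AFTER + COMPLY_AFTER


for day in (date(2026, 5, 1), date(2026, 5, 31)):
    print(day, "->", compliance_deadline(day))
# 2026-05-01 -> 2026-12-27
# 2026-05-31 -> 2027-01-26
```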

That sounds like plenty of time until you list the work. Encrypting all data stores. Rearchitecting service account patterns. Implementing network segmentation. Building a patch management process with 15-day SLAs. Setting up vulnerability scanning. Scheduling a penetration test. Creating and testing a 72-hour recovery plan. Documenting an asset inventory and network map. Establishing an annual compliance audit process.

For a startup with five engineers and no dedicated security person, that is not a 180-day project. It is a “start now and work steadily” project.

What to do right now

I am going to be direct about the prioritization. Not every change in the proposed rule carries the same weight, and you cannot do everything at once.

This week, take inventory of your encryption posture. List every data store, backup location, and log aggregation service that touches PHI. Note which ones are encrypted at rest and which are not. This gives you a gap list and a project plan.
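The gap list can start as a handful of records. You can fill them in by hand or from provider APIs; on AWS, for example, the RDS `DescribeDBInstances` API reports a `StorageEncrypted` flag per instance. The `DataStore` fields and store names below are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DataStore:
    name: str
    kind: str                # e.g. "database", "backup", "logs", "export"
    contains_phi: bool
    encrypted_at_rest: bool


def encryption_gaps(stores: List[DataStore]) -> Dict[str, List[str]]:
    """Group PHI-bearing stores that are not encrypted at rest by kind."""
    gaps: Dict[str, List[str]] = {}
    for s in stores:
        if s.contains_phi and not s.encrypted_at_rest:
            gaps.setdefault(s.kind, []).append(s.name)
    return gaps


stores = [
    DataStore("prod-postgres", "database", True, True),
    DataStore("staging-postgres", "database", True, False),
    DataStore("log-aggregator", "logs", True, False),
    DataStore("marketing-cms", "database", False, False),
]
print(encryption_gaps(stores))
# {'database': ['staging-postgres'], 'logs': ['log-aggregator']}
```

Grouping by kind keeps the output aligned with the forgotten places listed earlier (staging, logs, exports), which tend to have different owners.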

This month, audit your service accounts and shared credentials. Every system-to-system connection that touches PHI needs a plan to move to per-user or per-service authentication with proper attribution. This is the change with the longest lead time because it often requires integration work with your customers’ EHR systems.
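A first pass at the audit is to find credentials that more than one consumer uses, or that nobody owns. This sketch assumes you can export `(credential_id, consumer, owner)` rows from your secrets manager and deployment configs; that format, and the names in it, are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def shared_credentials(usage: List[Tuple[str, str, str]]) -> Tuple[List[str], List[str]]:
    """Return (credentials used by more than one consumer, credentials with no owner)."""
    consumers: Dict[str, Set[str]] = defaultdict(set)
    unowned: Set[str] = set()
    for cred, consumer, owner in usage:
        consumers[cred].add(consumer)
        if not owner:
            unowned.add(cred)
    shared = {c for c, users in consumers.items() if len(users) > 1}
    return sorted(shared), sorted(unowned)


usage = [
    ("ehr-api-key", "sync-worker", "alice"),
    ("ehr-api-key", "reporting-job", "alice"),  # same key, second consumer
    ("db-readonly", "analytics", ""),           # nobody owns this credential
    ("svc-billing", "billing", "bob"),
]
print(shared_credentials(usage))  # (['ehr-api-key'], ['db-readonly'])
```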

In the next 60 days, if you do not have a formal patch management process, build one. Subscribe to vulnerability feeds for your stack. Define your SLAs: 15 days for critical, 30 days for high. Track it somewhere your team will actually look at it.

In the next 90 days, engage a penetration testing firm. The wait times for qualified firms can be 4-8 weeks, so booking early matters. Budget $10,000-$30,000 depending on scope.

Ongoing: start the asset inventory and network map. It is boring and it takes time, but it is foundational for everything else. If you cannot list the systems that touch PHI, you cannot secure them.

Why this is actually good for healthtech startups

I will end with a take that might be unpopular with the people arguing for withdrawal of the proposed rule.

The addressable/required distinction created a false sense of flexibility. CTOs at healthtech startups would document that encryption at rest was not necessary and feel compliant. Then a health system’s procurement team would reject them anyway, because the health system’s own security policies required it regardless of what HIPAA technically demanded.

The new rule aligns regulatory requirements with what enterprise buyers already expect. If you are selling to health systems today, your buyers are already asking about encryption at rest, MFA, penetration testing, and incident response plans. The proposed rule does not add new expectations. It catches the regulation up to the market.

Fewer ambiguities in the rule means fewer surprises in procurement. And fewer surprises in procurement means deals close faster. That is not a bad outcome for a startup trying to land enterprise healthcare contracts.

The compliance work is real. The timeline is tight. But if you have been building your security program to satisfy your customers rather than the minimum regulatory bar, you are closer to audit-ready than you might think.